Interview with Camille Domange, lawyer at the Paris Bar and founder of CDO AVOCAT, a firm dedicated to the creative, digital and innovation sectors, to better understand the challenges of artificial intelligence in the music sector.

Camille, with so many people talking about artificial intelligence every day, how do you define the term?

When we talk about an artificial intelligence system, we're referring to a machine-based system, designed to operate with different levels of autonomy, and which can generate results. So there isn't just one kind of artificial intelligence, but several. Midjourney, ChatGPT and DALL-E are good examples.

How do artificial intelligence systems like Midjourney or ChatGPT work?

These generative artificial intelligence systems produce what is known in computing as "output" data. To obtain this output, they dismantle, as it were, the pre-existing data they have ingested: they analyze it to extract trends, or break it down into elementary, common characteristics that can be exploited technically, and then recompose those elements through their generative faculties into new content. The point to bear in mind is that the pre-existing data ingested by these artificial intelligence systems may be subject to special protection regimes. I'm thinking here of personal data, works protected by copyright or neighboring rights, and attributes protected by personality rights, such as a person's image or voice.

In the music sector, this can quickly become complicated, given the number of players involved...

Music law is complex. We need to take into account publishing, composition and recording rights, as well as the moral rights of composers and artists. In this context, artificial intelligence systems come up against the full force of authors' and artists' rights... We need to be aware that the greater the amount of pre-existing data ingested by artificial intelligence systems, the less chance there is of perceiving, of detecting, the slightest element of the original creation in the result generated. This raises the question of proof.

As you can see from these artificial intelligence systems, it's possible to generate content in the style of an author or artist. Can a creative style be protected by copyright?

There's an adage in copyright law with which you're no doubt familiar: ideas, even those bearing the stamp of genius, are free for all to use and therefore not protectable as such by copyright law. So, for example, borrowing an author's or artist's style, or making a creation "in the manner of", would not require prior authorization from the rights holders. It's quite illusory to think that anyone can be prevented from doing with machines what they used to do before, i.e. drawing inspiration from what others do.

So what can be done to protect these creations?

We need to be able to act upstream and control what is used by artificial intelligence systems in order to function. This raises the whole question of the transparency of the data used by artificial intelligence systems.

This is what SACEM did by exercising its opt-out right for the authors in its catalog, right?

That's right. This opt-out right was introduced as part of a European directive of April 17, 2019 on copyright and related rights in the digital single market. This directive provides for an exception to copyright relating to text and data mining, known as the "TDM" exception. This exception mechanism finds a concrete application in the training of artificial intelligence systems, which ingest large amounts of data. It should be remembered, however, that exception mechanisms are subject to a specific framework: they may neither undermine the normal exploitation of the work nor cause unjustified prejudice to the legitimate interests of rights holders. Legitimizing web scraping on the basis of this exception is therefore legally questionable.

How does this opt-out right work?

In order to strike a balance with authors' rights, this opt-out right has been established for the benefit of rights holders, who can use it to state explicitly, by machine-readable means, that they do not wish their copyrighted content to be mined and fed into artificial intelligence systems. In France, this opt-out right has been transposed into our Intellectual Property Code in a more restrictive way, as it can only be exercised by the authors themselves and, by extension, their representatives. While this opt-out right remains rather unsatisfactory given the way data circulates, is shared and goes viral on networks, we should not overlook this legal basis, which can be a relevant lever in possible legal actions and can, in that context, be exercised as a precautionary measure.
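
To make "machine-readable means" concrete, here is a minimal sketch of how a crawler could check such a reservation before mining a page. It assumes the rights holder expresses the opt-out in a robots.txt file, which is only one possible convention and is not named in the interview; the crawler name `ExampleAIBot`, the URL and the helper `may_mine` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt published by a rights holder.
# "ExampleAIBot" is an invented crawler name used only for illustration.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /

User-agent: *
Allow: /
"""


def may_mine(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules let `user_agent` fetch `url`."""
    parser = RobotFileParser()
    # Parse the rules from text instead of fetching them over the network.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://example.org/catalog/track-001"
    print(may_mine(ROBOTS_TXT, "ExampleAIBot", url))     # the AI crawler is refused
    print(may_mine(ROBOTS_TXT, "OrdinaryBrowser", url))  # other agents are allowed
```

A check of this kind only works if the crawler chooses to honor it, which is one reason the opt-out is described above as unsatisfactory on its own.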

This is a real question, because even with the exercise of this opt-out right, it will be difficult to identify the works used, how they were used and, above all, when they were used... especially before the introduction of this opt-out right.

Artificial intelligence systems have developed into veritable black boxes, so that it is extremely complex to identify the data that has been used or is currently being used. This is why important discussions on data transparency have been launched as part of the trilogue examination (European Parliament, Council of the European Union and European Commission) of the future European regulation on artificial intelligence, with the aim of forcing companies developing these artificial intelligence systems to reveal the sources on which they have trained. This subject is far from trivial, for beyond the legal implications of greater transparency regarding the sources used, questions of trust and governance are at stake. It would have been desirable for AI suppliers to be able to provide a detailed and continually updated list of the works used by AI systems and their sources. To be effective, this list could have been transmitted to trusted third parties such as collective management bodies or an independent administrative authority.

The political agreement reached on December 8 as part of the future European regulation on artificial intelligence provides for an obligation on artificial intelligence providers to publish a detailed summary of the training data used, as well as the need to clearly inform users that they are interacting with an artificial intelligence. As it stands, this obligation is not very restrictive, as the publication of a simple summary of the data used will not enable right holders in particular to know whether the fruits of their labor have been improperly used.

To whom does this future European regulation on artificial intelligence apply, and what does it provide?

This regulation will apply not only to artificial intelligence technologies developed within the European Union, but also to any operator operating in the European single market. It lays the foundations for a set of European rules to protect the rights of individuals with regard to the use of artificial intelligence. Discussions on this text led to a political agreement on the night of December 8. The European regulation is described by its architects as "historic", and as "a launching pad for European startups and researchers in the global race for artificial intelligence".

This agreement adopts a uniform, technology-neutral definition of artificial intelligence, so that the text is not locked into any particular technology, and can be applied to all future artificial intelligence systems. The text is built around two main pillars.

The first pillar concerns the establishment of common rules for all artificial intelligence systems, such as the need for technical robustness and security, human supervision of artificial intelligence systems, and respect for the principles of confidentiality, diversity and non-discrimination, among other things.

The second pillar concerns more or less restrictive obligations, depending on the degree of risk that artificial intelligence systems may pose to individuals' rights. The idea is that the greater the risk, the more onerous the obligations weighing on the suppliers of these systems. In this context, a repressive component is planned. Any infringement of these rules by artificial intelligence providers will result in sanctions, with fines ranging from 7.5 million euros or 1.5% of sales to 35 million euros or 7% of worldwide sales, depending on the infringement and the size of the company.
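
To give an order of magnitude, the two tiers quoted above can be turned into a small calculation. This is a sketch under one stated assumption that the interview does not spell out: that the ceiling for a given tier is the higher of the fixed amount and the percentage of worldwide sales. The function name and the sample sales figures are hypothetical.

```python
def fine_ceiling_eur(fixed_cap_eur: int, rate_bps: int, worldwide_sales_eur: int) -> int:
    """Ceiling of a fine for one tier, in whole euros.

    ASSUMPTION (not stated in the interview): the ceiling is the higher of
    the fixed amount and the share of worldwide sales.
    `rate_bps` is the rate in basis points (700 = 7%, 150 = 1.5%).
    """
    share_of_sales = worldwide_sales_eur * rate_bps // 10_000
    return max(fixed_cap_eur, share_of_sales)


if __name__ == "__main__":
    large_company_sales = 2_000_000_000  # hypothetical: 2 billion euros
    small_company_sales = 100_000_000    # hypothetical: 100 million euros

    # Upper tier: 35 million euros or 7% of worldwide sales.
    print(fine_ceiling_eur(35_000_000, 700, large_company_sales))  # 140000000
    print(fine_ceiling_eur(35_000_000, 700, small_company_sales))  # 35000000

    # Lower tier: 7.5 million euros or 1.5% of sales.
    print(fine_ceiling_eur(7_500_000, 150, large_company_sales))   # 30000000
```

Integer arithmetic in basis points is used so that the results are exact euro amounts rather than floating-point approximations.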

Now that this political agreement has been reached, work will continue at a more technical level to finalize the details of the future regulation. And as we all know, the devil is in the details, so not everything has been settled yet. It is this finalized text that will be submitted to the representatives of the Member States (Coreper) for approval. The approved text will then have to be formally adopted by the European Parliament and Council before the European elections in spring 2024. It has been specified that this European regulation will apply two years after its entry into force, with a few exceptions for specific provisions.

Are we waiting for this European text to come into force to ensure that artificial intelligence issues are regulated?

The future European regulation will be an important tool in regulating the challenges of artificial intelligence, as will other texts currently in progress, but our current legal framework already allows a great deal to be done, and regulatory issues can also be dealt with outside of legislative and regulatory norms.

We had a good example of this in the audiovisual sector across the Atlantic with the screenwriters' strike in the USA, which lasted almost 150 days and led to a protective framework through professional negotiation. In particular, screenwriters wanted restrictions on the use of all or part of their writing work to train AI systems. The Writers Guild of America, the powerful screenwriters' union, won its case after intense negotiations with studios and producers, and has thus secured certain broad principles regarding the use of AI. In France, it might well be possible to regulate practices in the field of artificial intelligence through professional negotiation in certain creative sectors. It would be quite virtuous to have a framework shaped by professionals, by those on the front line. In addition to professional negotiation, regulation can also take the form of corporate governance or contractual agreements between users, AI systems and rights holders, in order to develop AI systems that fully respect the rights of third parties and operate on the basis of qualified data.

Isn't this just wishful thinking?

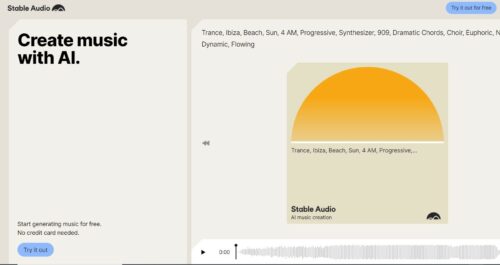

No, some players are already giving us concrete examples in this direction. This is what Stability AI is doing in the music sector, for example, with Stable Audio, where the generative AI algorithms developed are based on a catalog of pre-existing musical works for which the authors and performers have given their authorization, and who will receive a share of the revenue generated if the generated song is successful. This shows that AI systems that respect intellectual property rights can also become levers for giving new life to created works, while at the same time attracting the interest of those who created them. It's a question of sharing value. At our firm, we are increasingly consulted on these issues by market players who are thinking about setting up legal and ethical corporate governance systems to lay down the main principles governing the use of artificial intelligence and the associated consequences.

At the stage of development of artificial intelligences as we know them today, can we really speak of intelligent systems?

AI systems as they are presented are first and foremost computer programs based on data mining. The more precise the prompts written by humans, the more the programs will be able to respond and generate targeted data. So it's misleading to say that the tool is intelligent while at the same time erasing the role of the user. In today's AI systems, there is no adaptation to the indeterminate, and that's what intelligence is all about. Intelligence means adapting to an environment, to objectives, and this implies being confronted with data over which we have no control. I'm not sure we can currently say that AI can adapt to its environment without prior rules.

So you're saying that artificial intelligence won't replace human creativity any time soon?

Generative artificial intelligences work on previous creations, on what has been produced in the past by authors and artists; they correlate results, so to speak. It is therefore more a question of generated content than of a creative act as such... but after all, in law, it's all a question of qualification, and it would be entirely conceivable to treat an artificial intelligence system as simple technical assistance to human creation, in which case the resulting work could claim protection under copyright law as shaped by French and European case law.

However, it is far from a foregone conclusion that artificial intelligence will replace creators. It will play a part in shaping their work, but it cannot replace the creator, his or her power of innovation, his or her power of life, for it is this that drives and summons our emotions when faced with the singularity of a creation. In the field of music, generative AI is quite formidable when it comes to generating and combining sounds, but it cannot, at least for the time being, replace the work of composition and melodic creation. A certified account for a "composer" and "performer" named Anna Indiana, created ex nihilo by artificial intelligence, has appeared on X, where the "AI artist's" musical creations are shared. I find this approach quite interesting, as it clearly shows the potential offered by AI, but also its limits in musical terms.

The issue today is to know how authors and artists can use AI in their creative work, and how value can be shared between all those involved in the creative process, in order to guarantee a healthy balance between regulation and innovation. This subject is not covered by the future European regulation, and it is indeed on this crucial subject that the legislator is expected to act.

*See also the press release from the Syndicat français des compositrices et compositeurs de musique contemporaine.
