Sonification (the use of non-speech sound to convey information or make data perceptible, to borrow the most common definition) is now present almost everywhere, in the sciences and technology as much as in artistic practice (sonic and otherwise). Its first uses, however, go back a long way (the principle of the Geiger counter was conceived in 1913), and its use in music dates from the 1960s. Starting from a few examples drawn mostly from sound art, we will try to pull a few threads through the labyrinth of sonification.

Between January 5 and 31, 1969, the American artist Robert Barry took part in a group exhibition organized by Seth Siegelaub in two rooms of a New York building. What he exhibited was neither visible nor audible. The catalogue, available in one of the two rooms, listed the contents of his installation: a 40 kHz ultrasonic wave, two radio carrier waves (AM and FM, broadcast by transmitters hidden in a closet), radiation emitted by a sample of barium-133 buried in Central Park, and a radio wave transmitted from an apartment in the Bronx to a radio amateur in Luxembourg.

In 2003, the German artist Christina Kubisch, after working for many years with electromagnetic induction systems, developed wireless headphones sensitive to electromagnetic waves (by means of an induction coil). Since then she has organized urban walks in which participants are invited to listen to the non-acoustic waves in which we are immersed without knowing it.

On May 5, 1965, at a concert organized by John Cage at the Rose Art Museum of Brandeis University, Alvin Lucier presented Music for Solo Performer, for enormously amplified brain waves and percussion. The piece consisted of "enormously" amplifying the alpha waves generated by the composer's brain so that their vibrations, transduced by loudspeakers, would set off various percussion instruments placed around the concert hall. While Alvin Lucier concentrated, electrodes on his temples, John Cage, acting as assistant, distributed the signals through the space.

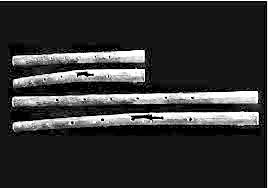

In October 2001, the American artist Greg Niemeyer exhibited an installation titled Oxygen Flute at the Kroeber Museum of the University of California, Berkeley. Visitors entered a chamber in which bamboo was growing and where four computer-modeled flutes could be heard playing through four loudspeakers. The sound of the flutes depended on the carbon dioxide level in the chamber, which sensors measured twice per second. The flutes responded not only to the breathing of the visitors in the chamber, which produced short-term fluctuations, but also to the slow evolution of the carbon dioxide level resulting from the combined presence of humans and bamboo: the rise in the level during visiting hours was answered by the daily cycle of photosynthesis. The flutes had been modeled on bone instruments excavated at a Neolithic site in central China. The data collected by the sensors (the instantaneous carbon dioxide level, its averages, and its evolution over time) were translated into sound by an algorithm with six parameters (breath, noise, embouchure, fingering, portamento, mute). Put in a single sentence, Oxygen Flute is a computer-controlled interactive musical environment that makes audible the gas exchanges between visitors and the atmosphere in which they are immersed: one of the installation's explicit aims was to make us aware of the effects that our mere presence has on our environment. As Greg Niemeyer puts it: "Our globe is nothing but a much larger container." (1)

Sonification vs audification

Robert Barry is undoubtedly a conceptual artist, but the works he exhibits could not be more material. The waves I have described are indeed detectable, provided one has the appropriate devices: radio receivers, a microphone sensitive to ultrasound, and a Geiger counter. These devices sonify, that is, make audible what is not. One should, however, distinguish ultrasound from radio waves and radiation: the former is sound but inaudible to the human ear, while the latter are not sound at all. We will say (as a first approximation) that the microphone audifies, while the radio receiver and the Geiger counter sonify (the latter was one of the first measuring instruments to make use of sonification).

Christina Kubisch's augmented headphones sonify the electromagnetic waves that pass through them, whether of human origin (Wi-Fi, mobile phones, electrical fields, radar systems, street lighting, etc.) or not (waves coming from the ionosphere, such as those emitted by thunderstorms).

WAVE CATCHER – excerpt from Christina Kubisch on Vimeo.

Music for Solo Performer amplifies and transduces the very low frequency waves (between 8 and 12 Hz) emitted by the brain of the composer-performer, then passes their vibrations on to the instruments. The waves do not become audible as such, only through their mechanical effects, which is a way of preserving their inaudibility (and therefore their strangeness): Alvin Lucier sonifies, but does not make this sonification directly audible. He also devoted two works to the electromagnetic waves produced by the Earth's atmosphere: Whistlers (1966) and Sferics (1981). In both cases the work involves sonification using radio antennas. "Whistlers" are very low frequency radio waves, most often produced by lightning, that travel through the ionosphere.

Oxygen Flute relies on a sonification of atmospheric data. The point is not to make a sound or non-sound wave audible, but to translate a set of data into sound, which requires an algorithm and finely tuned parameters (as we have seen). Greg Niemeyer describes himself as a "data artist."
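To make the idea of parameter mapping concrete, here is a minimal Python sketch. It is not the algorithm used in Oxygen Flute, whose details are not given here: only the six parameter names and the three features (instantaneous level, average, evolution over time) come from the description above. The 400 to 2000 ppm normalization range, the window length, the function name `map_co2_to_params`, and every individual mapping are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

# Hypothetical normalization bounds in ppm; not taken from the installation.
CO2_MIN, CO2_MAX = 400.0, 2000.0


@dataclass
class FluteParams:
    breath: float
    noise: float
    embouchure: float
    fingering: int
    portamento: float
    mute: float


def normalise(ppm: float) -> float:
    """Clamp a CO2 reading and scale it to the range [0, 1]."""
    x = (ppm - CO2_MIN) / (CO2_MAX - CO2_MIN)
    return min(1.0, max(0.0, x))


def map_co2_to_params(samples: list[float], window: int = 10) -> FluteParams:
    """Map recent CO2 readings (e.g. two per second) to six flute parameters.

    Hypothetical mapping built on three features: the latest value,
    the mean over the window, and the trend across the window.
    """
    if not samples:
        raise ValueError("need at least one sample")
    recent = samples[-window:]
    now = normalise(recent[-1])
    mean = normalise(sum(recent) / len(recent))
    trend = normalise(recent[-1]) - normalise(recent[0])  # in [-1, 1]
    return FluteParams(
        breath=now,                           # follows short-term fluctuations
        noise=abs(now - mean),                # deviation from the local average
        embouchure=mean,                      # follows the slower drift
        fingering=round(mean * 7),            # one of 8 hypothetical fingerings
        portamento=min(1.0, abs(trend) * 4),  # glide more when the level changes fast
        mute=1.0 - mean,                      # more muted when the air is cleaner
    )
```

For instance, `map_co2_to_params([1200.0])` yields `breath=0.5` and `mute=0.5`. Keeping the features separate from the mapping shows where interpretation enters: each line of the mapping is an aesthetic decision rather than a measurement.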

Geosonification

According to the most common definition, sonification is the use of non-speech sound to convey information or make data perceptible (this is what we will call sonification in the broad sense). The preceding examples, however, invite us to distinguish two forms of it: sonification (in the narrow sense) and audification. Whereas the latter is a transduction, the former is rather a matter of translation.

Transduction can operate by degree (for example from an inaudible sound to an audible one, which requires a transducing device) or by kind (when one type of energy is converted into another, for example an electromagnetic wave into a sound wave), but in every case its operation is analog: it involves a proportional and continuous relation between two quantities. (2)

Sonification, for its part, is a passage from one order into another, from numerical data to audible sounds. Its operation is symbolic in nature: it presupposes a translation, and therefore a work of interpretation and, in most cases, of simplification.
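The contrast between transduction and translation can be sketched in a few lines of Python. In `audify`, each data value becomes one audio sample through a single proportional scaling, so the shape of the signal survives intact. In `sonify`, each value is quantized onto a discrete musical scale, which is one simple instance of parameter-mapping sonification: a symbolic translation in which nearby values may collapse onto the same note. The C major scale, the two-octave range, and the use of MIDI note numbers are arbitrary illustrative choices.

```python
def audify(data: list[float]) -> list[float]:
    """Audification: each datum becomes one audio sample.

    A single proportional scaling preserves the shape of the signal
    (an analog, continuous relation between data and sound).
    """
    peak = max(abs(x) for x in data) or 1.0  # avoid dividing by zero
    return [x / peak for x in data]          # samples in [-1, 1]


# Semitone offsets of a major scale (an illustrative choice).
C_MAJOR = [0, 2, 4, 5, 7, 9, 11]


def sonify(data: list[float], base_midi: int = 60) -> list[int]:
    """Parameter-mapping sonification: each datum selects a discrete pitch.

    Values are quantized onto two octaves of scale degrees, a symbolic
    translation that simplifies: close values can share the same note.
    """
    lo, hi = min(data), max(data)
    span = (hi - lo) or 1.0
    top_step = len(C_MAJOR) * 2 - 1  # 14 scale degrees: steps 0..13
    notes = []
    for x in data:
        step = round((x - lo) / span * top_step)
        octave, degree = divmod(step, len(C_MAJOR))
        notes.append(base_midi + 12 * octave + C_MAJOR[degree])
    return notes
```

With `data = [0.0, 0.01, 1.0]`, `audify` keeps the small difference between the first two values, whereas `sonify` maps both to MIDI note 60: the simplification described above, in miniature.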

To describe her work, the American artist Andrea Polli prefers the term geosonification, which refers to the sonification of data ("data sonification") coming from the "natural world." (3) She places this practice in continuity with that of the soundscape, which involves recording and audification. One of Hildegard Westerkamp's best-known soundscapes, Kits Beach Soundwalk, composed in 1989, relies on the amplification of barely audible sounds, in this case those of barnacles (small crustaceans that live on coastal rocks) feeding. Geosonification allows Andrea Polli to reconstruct, on a human scale, landscapes or phenomena that are impossible to take in as a whole, such as a set of several hundred storms spread along the East Coast of the United States (Atmospherics/Weather Works, 1999-2001, in collaboration with the meteorologist Glenn Van Knowe) or the atmospheric conditions and climatic changes at the North Pole between 2003 and 2006 (N., 2007, in collaboration with Joe Gilmore). In Atmospherics/Weather Works, she used the digital data streams generated by storm models to build the shapes and contours of the sounds rather than the sounds themselves. One advantage of this mapping was that it made audible fine temporal structures that meteorologists had until then only been able to imagine. (4) Geosonification is also, for Andrea Polli, a way of building a connection with an environment or situation that is hard to grasp, and of creating the conditions for political awareness.
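One possible reading of using data to build "the shapes and contours of the sounds rather than the sounds themselves" is to turn a data series into an amplitude envelope applied to a fixed tone. The Python sketch below does exactly that; it is a hypothetical illustration, not a reconstruction of Polli's actual mapping, and the sine carrier, the 220 Hz frequency, the 8000 Hz sample rate, and the function names `envelope_from_data` and `shaped_tone` are all assumptions.

```python
import math


def envelope_from_data(data: list[float], n_samples: int) -> list[float]:
    """Stretch a data series to n_samples by linear interpolation, scaled to [0, 1]."""
    lo, hi = min(data), max(data)
    span = (hi - lo) or 1.0
    norm = [(x - lo) / span for x in data]
    if len(norm) == 1 or n_samples == 1:
        return [norm[0]] * n_samples
    env = []
    for i in range(n_samples):
        pos = i * (len(norm) - 1) / (n_samples - 1)  # position in the data series
        j = min(int(pos), len(norm) - 2)             # left neighbour index
        frac = pos - j
        env.append(norm[j] * (1 - frac) + norm[j + 1] * frac)
    return env


def shaped_tone(data: list[float], freq_hz: float = 220.0,
                duration_s: float = 1.0, sr: int = 8000) -> list[float]:
    """A sine tone whose loudness contour follows the data, not its waveform."""
    n = int(duration_s * sr)
    env = envelope_from_data(data, n)
    return [env[i] * math.sin(2 * math.pi * freq_hz * i / sr) for i in range(n)]
```

Because the data control only the loudness contour, the evolution of a storm would be heard as swells and decays of a single tone; mapping the same series to pitch or to a filter setting would be an equally valid, and equally interpretive, choice.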

Music and black holes

Sonification today has countless applications and uses, from astronomy to medicine, by way of geology and the automotive industry. In 2022, NASA released online a sonification of the supermassive black hole at the center of the Perseus galaxy cluster, based on the ripples the black hole produces in the hot gas surrounding it. (5) This is one example among many. The astrophysicist Philippe Zarka, who has made sonification a specialty, titled the page devoted to his sonifications on the website of the Laboratoire d’études spatiales "Les chants du Cosmos" ("The Songs of the Cosmos"). (6) Although he does not try to musicalize the radio signals he converts into sound (his practice is as literal as possible), the musical paradigm remains inseparable from the way we imagine the Cosmos. And one may well favor a mapping that musicalizes, as is the case, for example, in Oxygen Flute. But sonification in the broad sense (as translation and transduction) is not, strictly speaking, a musical procedure. Music does not sonify. It composes on the basis of an implicit, evolving order made of scales, rhythms, meters, dynamics, and instrumental timbres. And while it obviously expresses and signifies, it does so from within this order, which it constantly reinvents and which is the condition of its practice. As Christian Accaoui has shown very convincingly in a recent book, the regime of musical imitation that dominated the classical age is that of analogy, halfway between copy and representation. (7) What matters is less the precision of the sound painting than the clarity of the reference. Even when music imitates the noises of the world (thunder, wind, animal cries), its mimesis remains vague and deliberately approximate. To imitate is to refer, whereas to sonify is to translate or transduce.

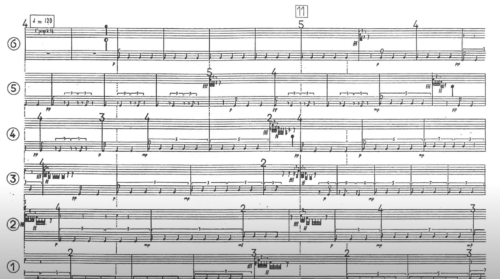

Le Noir de l’Étoile

One of the clearest examples of this difference is Le Noir de l’Étoile by Gérard Grisey, for six percussionists and pulsar sounds. At its premiere in Brussels on March 16, 1991, a sonification of the pulsar 0359-54, whose radio signal was picked up by the Nançay radio telescope, was played live in the concert hall over twelve loudspeakers arranged around the audience. The pulse of this slow pulsar, like the pre-recorded pulse of the Vela pulsar (both were chosen for their slow rotation: their sonification is a rhythm rather than a pitch), remains until the end of the piece a foreign element around which the percussion relentlessly circles. As Gérard Grisey wrote in the program note about the pulsar sounds he had discovered in 1985 at the University of California, Berkeley: "What could I do with them? (…) integrate them into a musical work without manipulating them; simply let them exist, as landmarks within a music that would in some sense be their setting or their stage." The regime of our "modern" musical age is no longer that of analogy; it is that of pure music, that is, a music identified with form and sound insofar as they have ceased to refer to anything but themselves. And it is precisely because music no longer imitates that it must make the thing itself present, in this case the pulsar. Le Noir de l’Étoile does not compose its musical analogue; it summons the pulsar in person and confronts music and its instruments with it, contaminating them while remaining itself, foreign to the order that welcomes it.
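The remark that the slow pulsars yield "a rhythm rather than a pitch" comes down to simple arithmetic: a pulsar with rotation period P produces pulses at a rate of 1/P per second, and a pulse train is heard as separate beats when that rate is low, fusing into a tone only above roughly 20 Hz, the approximate lower limit of pitch perception (the transition is gradual rather than a sharp line). The Python sketch below encodes that rule of thumb; the threshold value and the example periods are illustrative assumptions, not figures from Grisey's score.

```python
# Approximate lower limit of pitch perception; an assumed rule of thumb.
PITCH_THRESHOLD_HZ = 20.0


def pulse_rate_hz(period_s: float) -> float:
    """Pulses per second produced by a pulsar with rotation period period_s."""
    if period_s <= 0:
        raise ValueError("period must be positive")
    return 1.0 / period_s


def perceived_as(period_s: float) -> str:
    """Classify a pulse train as 'rhythm' or 'pitch' from its repetition rate."""
    return "pitch" if pulse_rate_hz(period_s) >= PITCH_THRESHOLD_HZ else "rhythm"


# Illustrative periods in seconds (hypothetical values, not specific pulsars).
for period in (1.0, 0.1, 0.002):
    print(f"P = {period} s -> {pulse_rate_hz(period):.1f} Hz, heard as {perceived_as(period)}")
```

Running it prints one line per period: the first two are heard as rhythms (1 and 10 pulses per second), the last as a pitch (500 Hz).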

Sound art

Music does not sonify but, as the examples at the beginning of this text show, sound art has made sonification in the broad sense one of its major procedures. For, unlike music, sound art does not depend on an implicit order that pre-differentiates sounds (and discretizes the sonic field), but on the objects (phenomena, moments, places, landscapes, organs, etc.) that it chooses to take up and that it translates or transduces into audible sound.

There is no pure sound art. It always stands in relation to something non-sonic or inaudible that it must, one way or another, make present, if possible without erasing its difference. Its regime of signification is not mimetic, expressive, or formalist (the three modes of musical signification); it is "transductive" or "sonifying," and as such inseparable from the age of recording and the paradigm shift that accompanied it: the fact, historically located between the middle and the end of the nineteenth century, of no longer thinking of sound in terms of its production but of its reception by the ear (which converts the mechanical waves that make the eardrum vibrate into auditory perception), as an audible effect. (8) From then on, what counts as sound is not what is composed, played, or articulated, but what is heard, which potentially includes anything that can be converted into sound: electromagnetic waves, places, storms, climate change, brain activity, the carbon dioxide level in the air, etc. The order of sound art is none other than the disorder of the world.

Bird & Renoult – Two Tower (excerpt 1) from Birdy on Vimeo.

Bastien Gallet

(1) See the following two pages: https://chrischafe.net/oxygen-flute/ and https://ccrma.stanford.edu/~cc/pub/pdf/oxyFl.pdf

(2) I borrow this distinction from Douglas Kahn, Earth Sound Earth Signal: Energies and Earth Magnitude in the Arts, University of California Press, 2013, p. 55.

(3) See her article "Soundwalking, Sonification, and Activism," in The Routledge Companion to Sounding Art, M. Cobussen, V. Meelberg, and B. Truax (eds.), Routledge, 2016.

(4) See http://www.icad.org/websiteV2.0/Conferences/ICAD2004/papers/polli.pdf

(5) See https://www.nasa.gov/mission_pages/chandra/news/new-nasa-black-hole-sonifications-with-a-remix.html

(6) See https://lesia.obspm.fr/perso/philippe-zarka/Chants.html

(7) Christian Accaoui, La musique parle, la musique peint. Les voies de l’imitation et de la référence dans l’art des sons, vol. 1, Histoire, Paris, Éditions du Conservatoire, 2023, pp. 19-22.

(8) On this subject, see Jonathan Sterne, Une histoire de la modernité sonore, trans. Maxime Boidy, La Découverte/Éditions de la Philharmonie, 2015 (French translation of The Audible Past).

Article photo © Hicham Berrada

Photos of N. © Andrea Polli
